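This one uses Lucene's built-in n-gram filter:

    # Jython; the imports assume the Lucene 3.x package layout
    from org.apache.lucene.analysis import Analyzer, ASCIIFoldingFilter, LowerCaseTokenizer
    from org.apache.lucene.analysis.ngram import NGramTokenFilter

    class NGramAnalyzer(Analyzer):
        '''Analyzer that yields n-grams for minlength <= n <= maxlength'''
        def __init__(self, minlength, maxlength):
            self.minlength = minlength
            self.maxlength = maxlength

        def tokenStream(self, field, reader):
            # Split on non-letters and lower-case, fold accents to ASCII,
            # then emit the character n-grams of each token.
            lower = ASCIIFoldingFilter(LowerCaseTokenizer(reader))
            return NGramTokenFilter(lower, self.minlength, self.maxlength)

To turn a list of n-grams into a Document:

    from org.apache.lucene.document import Document, Field

    doc = Document()
    doc.add(Field('n-grams', ' '.join(ngrams), ...))  # store/index options elided

Options for computing the cosine similarity itself include:

- Pythonic: the cosine similarity function is written in pure Python using nested for loops and compiled with Numba.
- NumPy based: the cosine similarity function is written using NumPy APIs and then compiled with Numba.
- FAISS: in its developers' own words, "a library for efficient similarity search and clustering of dense vectors."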
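The NGramAnalyzer above needs Lucene running under Jython. As a rough, dependency-free approximation of its filter chain (an assumption, not Lucene's exact behaviour: Lucene's tokenizer and ASCII folding table differ in details), the sketch below splits text into runs of letters, lower-cases and folds them, and emits character n-grams for minlength <= n <= maxlength. It then compares two strings with a pure-Python cosine over the n-gram counts, in the spirit of the "Pythonic" option but without Numba. The names `fold_to_ascii`, `char_ngrams` and `cosine_counts` are hypothetical.

```python
import math
import re
import unicodedata
from collections import Counter


def fold_to_ascii(text: str) -> str:
    # Rough ASCII folding: NFKD-decompose, drop combining accents, then drop
    # anything still outside ASCII (Lucene's folding table covers more cases).
    decomposed = unicodedata.normalize("NFKD", text)
    stripped = "".join(ch for ch in decomposed if not unicodedata.combining(ch))
    return stripped.encode("ascii", "ignore").decode("ascii")


def char_ngrams(text: str, minlength: int, maxlength: int) -> list[str]:
    """Character n-grams of every token, for minlength <= n <= maxlength."""
    grams = []
    # Tokens are maximal runs of letters, lower-cased (like a letter tokenizer).
    for token in re.findall(r"[^\W\d_]+", text.lower()):
        token = fold_to_ascii(token)
        for n in range(minlength, maxlength + 1):
            grams.extend(token[i:i + n] for i in range(len(token) - n + 1))
    return grams


def cosine_counts(a: Counter, b: Counter) -> float:
    """Cosine similarity of two sparse n-gram count vectors, in pure Python."""
    dot = sum(count * b[gram] for gram, count in a.items())
    norm_a = math.sqrt(sum(c * c for c in a.values()))
    norm_b = math.sqrt(sum(c * c for c in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # one side has no n-grams: define the similarity as 0
    return dot / (norm_a * norm_b)


if __name__ == "__main__":
    a = Counter(char_ngrams("Café au lait", 2, 3))
    b = Counter(char_ngrams("cafe ole", 2, 3))
    print(cosine_counts(a, b))
```

Returning 0.0 when either side has no n-grams is a choice made here; the plain dot-product formula would divide by zero in that case.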

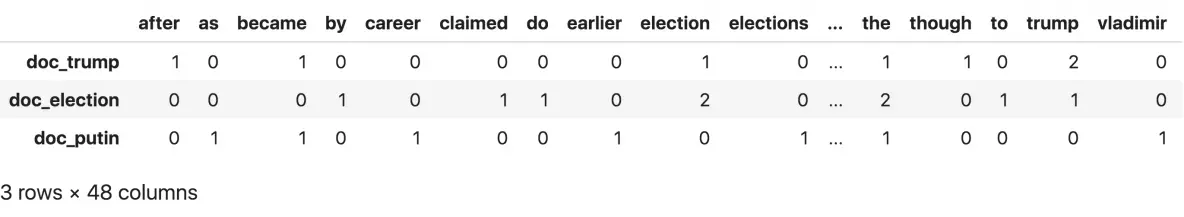

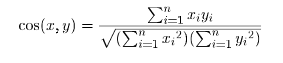

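Lucene preprocesses documents and queries using so-called analyzers. In case you're still interested in this problem, I've done something very similar using Lucene Java and Jython.

Alternatively, check out the NLTK package: it has everything you need.

For the cosine similarity:

    import math
    import numpy

    def cosine_distance(u, v):
        """
        Returns the cosine of the angle between vectors v and u.
        """
        # Despite the name, this is cosine *similarity*, u.v / (|u| |v|);
        # newer NLTK releases return 1 minus this value instead.
        return numpy.dot(u, v) / (math.sqrt(numpy.dot(u, u)) * math.sqrt(numpy.dot(v, v)))

For n-grams (signature and docstring):

    def ngrams(sequence, n, pad_left=False, pad_right=False, pad_symbol=None):
        """
        A utility that produces a sequence of ngrams from a sequence of items.
        Use ingram for an iterator version of this function. Set pad_left
        or pad_right to true in order to get additional ngrams.
        """
        ...  # body not shown; in current NLTK use: from nltk.util import ngrams

With those pieces, each sentence can be turned into a term-count vector over a shared vocabulary, and every pair of sentences compared:

    from itertools import product

    # cosine_sim: the same formula as cosine_distance above (a similarity).
    cosine_sim = cosine_distance

    def corpus2vectors(corpus):
        def vectorize(sentence, vocab):
            # Assumed: raw count of each vocabulary word in the sentence.
            return [sentence.lower().split().count(term) for term in vocab]
        # Assumed: the vocabulary is the sorted set of lower-cased words.
        vocab = sorted({w for sent in corpus for w in sent.lower().split()})
        vectorized_corpus = []
        for i in corpus:
            vectorized_corpus.append((i, vectorize(i, vocab)))
        return vectorized_corpus, vocab

    all_sents = [
        # ... (first sentence elided)
        'foo bar bar black sheep',
        'this is a sentence',
    ]

    corpus, vocab = corpus2vectors(all_sents)
    for sentx, senty in product(corpus, corpus):
        # Each item is a (sentence, vector) pair; compare the vectors.
        print(sentx[0], '|', senty[0])
        print("cosine =", cosine_sim(sentx[1], senty[1]))

A tf-idf weighted version ends with `return [[... for term in vocab] for doc, _ in enumerate(tfidf)]`, i.e. one row per document and one column per vocabulary term.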
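The NLTK excerpt shows only the signature and docstring of `ngrams`. As a stand-in (a stdlib sketch, not NLTK's code), the block below implements the behaviour that docstring describes, assuming that padding adds n - 1 copies of `pad_symbol` on the chosen side. The hypothetical helper `ngram_count_vectors` then builds word-n-gram count vectors over a shared vocabulary, the same layout `corpus2vectors` produces for single words, so the rows can be passed to a cosine function such as `cosine_distance`.

```python
from collections import Counter
from itertools import chain


def ngrams(sequence, n, pad_left=False, pad_right=False, pad_symbol=None):
    """Return n-gram tuples; padding adds n - 1 pad_symbol items on that side."""
    seq = list(sequence)
    if pad_left:
        seq = [pad_symbol] * (n - 1) + seq
    if pad_right:
        seq = seq + [pad_symbol] * (n - 1)
    # range() is empty when the sequence is shorter than n.
    return [tuple(seq[i:i + n]) for i in range(len(seq) - n + 1)]


def ngram_count_vectors(sentences, n):
    """One count vector per sentence over a shared, sorted word-n-gram vocabulary."""
    counts = [Counter(ngrams(s.lower().split(), n)) for s in sentences]
    vocab = sorted(set(chain.from_iterable(counts)))
    return [[c[gram] for gram in vocab] for c in counts], vocab


if __name__ == "__main__":
    vectors, vocab = ngram_count_vectors(
        ["foo bar bar black sheep", "this is a sentence"], 2)
    for gram, column in zip(vocab, zip(*vectors)):
        print(gram, column)
```

Bigrams keep word order, so "bar black" and "black bar" land in different columns, unlike the single-word counts in `corpus2vectors`.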
